One of the pivotal announcements at the recent Sitecore Symposium was the introduction of the API Platform within Sitecore Connect. While webhooks have been a valuable tool for handling integrations and scheduled jobs, they present several challenges when used for more complex, business-critical requirements: limitations in delivering authenticated APIs, lower rate limits, a lack of queuing strategies, and the inability to deliver customized responses (e.g., pre-hook webhooks in OrderCloud). The API Platform is built to address these limitations by offering a robust, scalable, and secure solution tailored for business-critical, complex, and high-volume events, while also delivering better performance, security, and operational efficiency.

Continue reading “Unveiling the API Platform: A Scalable Solution for Modern Integrations”

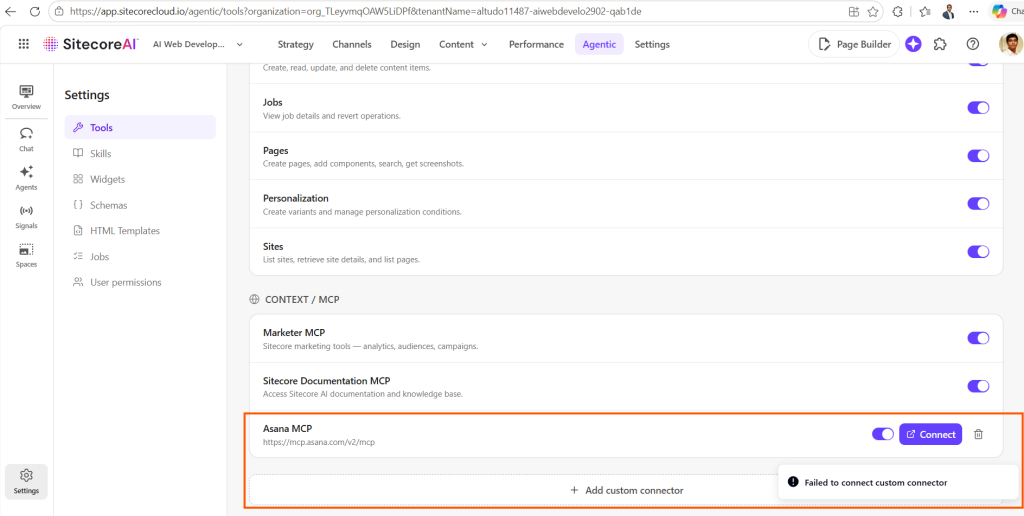

Continue reading “SitecoreAI Agentic Studio: “Failed to connect custom connector” When Adding Custom MCP Connectors? Here’s Why”
