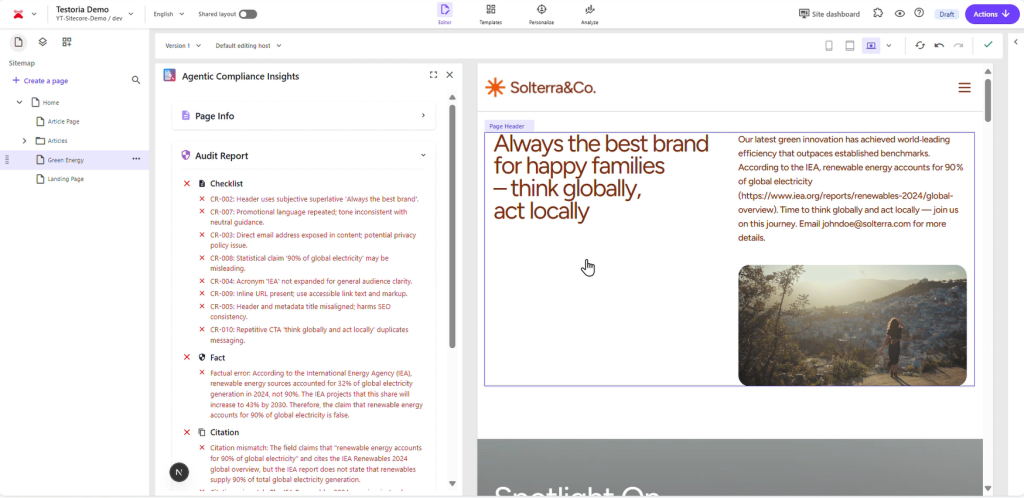

Discover how the open‑source Agentic Compliance Insights module brings generative AI into SitecoreAI to audit and improve your pages.

This post covers the motivation for automating compliance checks, the multi‑agent architecture behind the module, setup instructions, and how you can customize the solution for your own organization.

Continue reading “Agentic Compliance Insights – AI‑powered content governance for SitecoreAI”